Computers & the Internet

Data

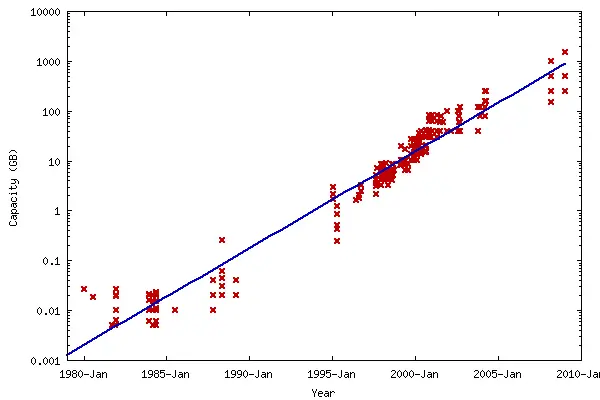

storage In 1956, IBM launched the RAMAC 305 - the first computer with a hard disk drive (HDD). This weighed over a ton and consisted of fifty 24" discs, stacked together in a wardrobe-sized machine. Two independent access arms moved up and down to select a disk, and in and out to select a recording track, all under servo control. The total storage capacity of the RAMAC 305 was 5 million 7-bit characters, or about 4.4 MB.
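The 4.4 MB figure comes from converting characters to bytes. The minimal sketch below assumes each character occupies exactly 7 bits, that a byte has 8 bits, and that 1 MB is a decimal megabyte (1,000,000 bytes). The helper name `capacity_mb` is illustrative only.

```python
# Convert the RAMAC 305's quoted capacity (5 million 7-bit characters) to megabytes.
# Assumptions: 8 bits per byte, decimal megabytes (10**6 bytes).

CHARACTERS = 5_000_000
BITS_PER_CHARACTER = 7
BITS_PER_BYTE = 8
BYTES_PER_MB = 10**6


def capacity_mb(characters: int, bits_per_char: int) -> float:
    """Return storage capacity in decimal megabytes."""
    total_bits = characters * bits_per_char
    return total_bits / BITS_PER_BYTE / BYTES_PER_MB


if __name__ == "__main__":
    print(f"{capacity_mb(CHARACTERS, BITS_PER_CHARACTER):.3f} MB")  # prints 4.375 MB
```

The result is 35 million bits, or 4,375,000 bytes, which rounds to the 4.4 MB quoted above.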

1962 saw the release of the IBM 1311 - the first storage unit with removable disks. Each "disk pack" held around two million characters of information, so users could easily swap packs to switch between files for different applications. Transistor technology, which replaced vacuum tubes, began to substantially reduce the size and cost of computer hardware. The IBM 3330 was introduced in 1970, with removable disk packs that could hold 100 MB. The 1973 model featured disk packs that held 200 MB (pictured here), with an access time of 30 ms and a data transfer rate of 800 kB/s.

Floppy disks arrived in 1971, revolutionising data storage. Although smaller in capacity, they were extremely lightweight and portable. The earliest versions measured 8 inches in diameter; these were followed by 5¼-inch disks in the late 1970s and 3½-inch disks in the mid-1980s.

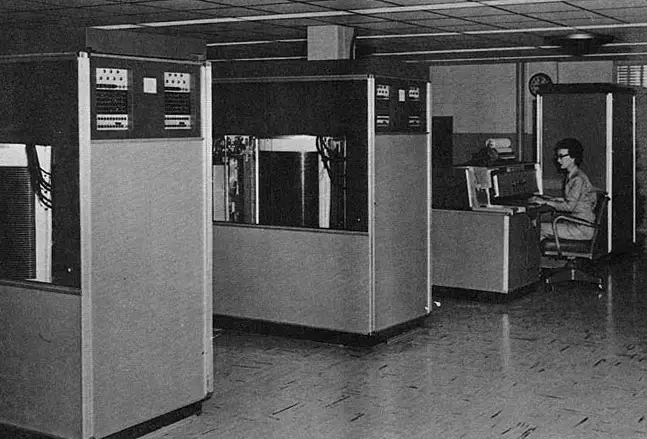

The IBM 3380 was introduced in 1980. This storage system held eight individual drives, each with a capacity of 2.5 GB (pictured here). The drives featured high-performance "cache" memory and transfer speeds of 3 MB/s. Each cabinet was about the size of a refrigerator and weighed 550 lb (250 kg). The price ranged from $648,000 to $1,136,600.

The growth of home computing in the 1980s led to smaller, cheaper, consumer-level disk drives. The first of these held only 5 MB, but by the end of the decade capacities of 100 MB were common.

Data storage continued to make exponential progress into the 1990s and beyond. Floppy disks gave way to CD-ROMs, which were replaced in turn by DVD-ROMs, and these began to be superseded by the Blu-ray format. Home PCs with 100 GB hard drives were common by 2005, and 1 terabyte (TB) hard drives were common by 2010.

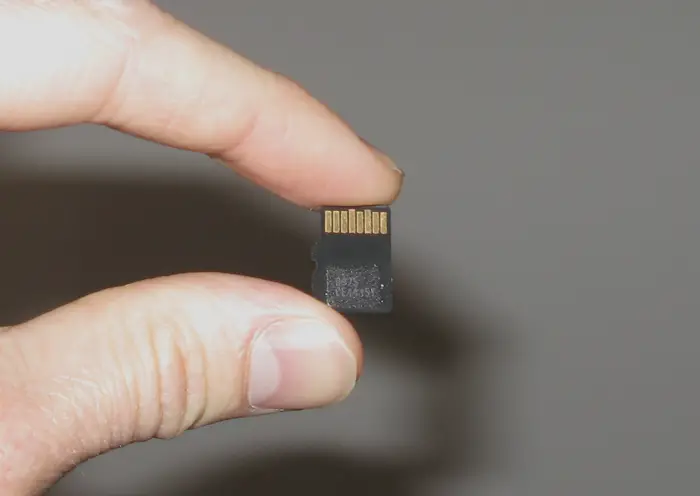

Secure Digital (SD) cards arrived in the early 2000s. Their thumbnail-sized form factor made them suitable for digital cameras, phones, MP3 players and other handheld devices. Micro-SD cards (pictured above) have shrunk this format even further. As of 2010, it is possible to store 32 GB of data on a device measuring 11 × 15 mm, weighing 0.5 grams and costing under $100. To put this in context, that is over 3 million times lighter and over 10,000 times cheaper than an equivalent device of 30 years ago.

So, what does the future hold? It is safe to assume that the exponential trends in capacity and price performance will continue, since they have held for over half a century. Even if current technology reaches the limits of miniaturisation, new formats are likely to emerge that open up new paradigms and even higher densities. Carbon nanotubes, for example, could enable components to be arranged atom-by-atom.

The memory capacity of the human brain has been estimated at between one and ten terabytes, with a most likely value of 3 terabytes.* Consumer hard drives are already available at this size.* Micro-SD cards holding 128 GB are being planned for 2011,* and there is even a 2 TB specification in the pipeline.* Well before the end of this decade, micro-SD cards (such as that pictured above) are likely to exceed the storage capacity of the human brain. By 2030, a micro-SD card (or equivalent device) will have the storage capacity of 20,000 human brains. By 2043, it will hold more than 500 billion gigabytes - equal to the entire contents of the Internet in 2009.* By 2050, if trends continue, a device the size of a micro-SD card will hold three times the combined brain capacity of the entire human race.
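These projections imply a particular growth rate. The sketch below estimates that rate from two figures in this section: 32 GB on a micro-SD card in 2010, and 20,000 brains at the 3 TB estimate in 2030. It assumes smooth exponential growth and decimal units (1 TB = 1,000 GB). The helpers `doubling_time` and `project` are illustrative names and do not come from any library.

```python
import math

GB_PER_TB = 1_000  # decimal units assumed


def doubling_time(start: float, end: float, years: float) -> float:
    """Years per doubling implied by growth from `start` to `end` over `years`."""
    return years / math.log2(end / start)


def project(start: float, years: float, doubling_years: float) -> float:
    """Value after `years` of steady exponential growth at a fixed doubling period."""
    return start * 2 ** (years / doubling_years)


if __name__ == "__main__":
    card_2010_gb = 32
    brain_gb = 3 * GB_PER_TB           # "most likely" brain estimate
    card_2030_gb = 20_000 * brain_gb   # 60 million GB

    t = doubling_time(card_2010_gb, card_2030_gb, 2030 - 2010)
    print(f"Implied doubling time: {t:.2f} years")

    # Carry the same rate forward from 2030 to 2043.
    card_2043_gb = project(card_2030_gb, 2043 - 2030, t)
    print(f"Projected 2043 capacity: {card_2043_gb:.2e} GB")
```

With these inputs, capacity doubles a little under once a year. Carrying that rate forward gives roughly 7 × 10^11 GB by 2043, which agrees with the "more than 500 billion gigabytes" projection.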

Internet users

Vast numbers of people in developing countries will gain access to the web, thanks to a combination of plummeting costs and exponential improvements in technology. Laptops costing only a few tens of dollars, together with explosive growth in the use of mobile broadband, will drive this expansion. Even some of the most remote populations on Earth will gain access to the Internet.*

Moore's Law

Moore's Law is the observation that the number of transistors on an integrated circuit doubles approximately every two years. The capabilities of many electronic devices are strongly linked to it: processing speed, memory capacity, sensors and even the number of pixels in digital cameras are all improving at exponential rates. This is dramatically enhancing the impact of digital electronics in nearly every segment of the world economy.

In 2011, Intel unveiled a new microprocessor based on 22 nanometre process technology.* Codenamed Ivy Bridge, it is the first high-volume chip to use 3-D transistors, and it packs almost 3 billion of them onto a single circuit. These new "Tri-Gate" transistors are a fundamental departure from the two-dimensional "planar" transistor structure used until now. They operate at lower voltage and with lower leakage, providing an unprecedented combination of improved performance and energy efficiency. They should enable dramatic innovations across a range of electronics, from computers and mobile phones to household appliances and medical devices.
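Moore's Law is commonly stated as a doubling of transistor count roughly every two years. The minimal sketch below applies that rule as an exact doubling period. As a hypothetical starting point it takes the figure of almost 3 billion transistors in 2011 quoted above. Real chips do not track the curve this precisely, and the function name `transistors` is illustrative.

```python
def transistors(start_count: float, start_year: int, year: int,
                doubling_years: float = 2.0) -> float:
    """Project transistor count under a fixed doubling period (idealised Moore's Law)."""
    return start_count * 2 ** ((year - start_year) / doubling_years)


if __name__ == "__main__":
    base = 3e9  # approximate 2011 figure quoted in the text (assumed starting point)
    for year in (2011, 2013, 2015, 2021):
        print(year, f"{transistors(base, 2011, year):.2e}")
```

Under this idealised rule, the count reaches about 6 billion by 2013 and roughly 96 billion by 2021.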

Even smaller and denser chips based on a 14 nm process are being planned for 2013, and the company's long-term roadmap includes sizes down to 4 nm in the early 2020s, approaching the scale of individual atoms. This will present major design and engineering challenges, because transistors at these dimensions are substantially affected by quantum tunnelling. In this quantum-mechanical effect, electrons pass through an insulating barrier they could not cross classically, which causes current to leak.
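A common textbook approximation shows why tunnelling becomes a problem as dimensions shrink. It holds for a roughly rectangular barrier that is thick compared with the electron's decay length. Take an electron of mass m and energy E meeting a barrier of height V > E and width d. Its transmission probability is approximately:

```latex
T \approx e^{-2\kappa d}, \qquad \kappa = \frac{\sqrt{2m\,(V - E)}}{\hbar}
```

Here ħ is the reduced Planck constant. Because T depends exponentially on d, halving the barrier width multiplies the tunnelling probability by a factor of e^{κd}, which is large whenever the approximation applies. This is a simplified one-dimensional model, not a description of any particular transistor design.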

From the 2020s onwards, carbon nanotubes or a similar technology may reach the mass market, creating a new paradigm that allows Moore's Law to continue.* Chips constructed on an atom-by-atom basis would reach incredible densities. Further into the future, chips may become integrated directly with the brain, combining artificial and human intelligence and dramatically enhancing our cognitive and learning abilities. This could allow technologies once considered the stuff of science fiction, such as full immersion VR, electronic telepathy and mind uploading, to become a reality. Ultimately, Moore's Law could lead to a "technological singularity": a point in time when machine intelligence is evolving so rapidly that humans are left far, far behind.*

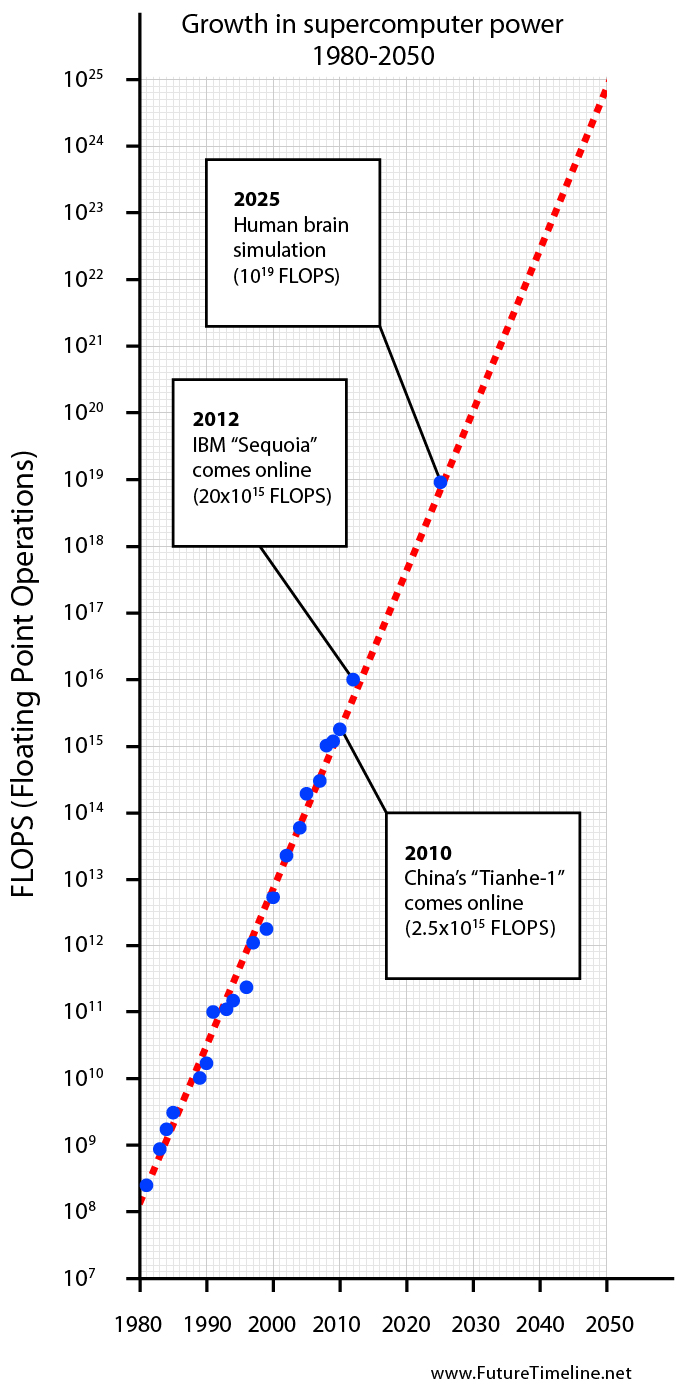

Supercomputers

Supercomputers were first developed in the 1960s. They were designed primarily by Seymour Cray at Control Data Corporation, which led the market into the 1970s, until Cray left to form his own company, Cray Research. His new designs then took over the supercomputer market, holding the top spot in supercomputing for five years (1985–1990). In the 1980s, a number of smaller competitors entered the market, in parallel with the creation of the minicomputer market, but many disappeared in the supercomputer market crash of the mid-1990s. Today, supercomputers are typically one-of-a-kind custom designs produced by traditional companies such as Cray, IBM and Hewlett-Packard, which had bought many of the 1980s companies to gain their experience.

Since October 2010, China has been home to the world's fastest supercomputer. The Tianhe-1A supercomputer, located at the National Supercomputing Center in Tianjin, is capable of 2.5 petaFLOPS; that is, over 2½ quadrillion (two and a half thousand million million) floating point operations per second.* America will take the lead once again in 2012, when IBM's Sequoia supercomputer comes online. It will have a maximum performance of 20 petaFLOPS, eight times that of Tianhe-1A and nearly an order of magnitude faster.* IBM believes it can achieve exaflop-scale computing by 2019. That would be roughly 400 times the performance of Tianhe-1A, and a thousandfold improvement on the first petaflop-scale machines.*

For decades, the growth of supercomputer power has followed a remarkably smooth and predictable trend, as seen in the graph below. If this exponential trend continues, complete simulations of the human brain and all of its neurons are likely to be possible by 2025.* In the early 2030s, supercomputers could reach the zettaflop scale, which could allow weather forecasts to achieve 99% accuracy over a two-week period. By the 2050s, supercomputers may be capable of simulating millions, or even billions, of human brains simultaneously. Combined with developments in artificial intelligence and brain-computer interfaces, this could enable the creation of virtual worlds similar to the one in the sci-fi film The Matrix. It is even possible that we are living in such a simulation at this very moment, without realising it.*
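The timeline in this section extrapolates an exponential trend. The sketch below estimates how long growth would take from Tianhe-1A's 2.5 petaFLOPS to a given target. It assumes a constant doubling period, which simplifies the real trend line fitted to decades of data. `years_to_reach` is an illustrative helper. The one-year doubling period is an assumed input and is not a figure from the text.

```python
import math

PETA, EXA, ZETTA = 1e15, 1e18, 1e21


def years_to_reach(current_flops: float, target_flops: float,
                   doubling_years: float) -> float:
    """Years needed to grow from current to target at a fixed doubling period."""
    return doubling_years * math.log2(target_flops / current_flops)


if __name__ == "__main__":
    tianhe_1a = 2.5 * PETA  # fastest machine as of October 2010
    for name, target in (("exaflop", EXA), ("zettaflop", ZETTA)):
        years = years_to_reach(tianhe_1a, target, doubling_years=1.0)
        print(f"{name}: {years:.1f} years after 2010 -> around {2010 + years:.0f}")
```

With a one-year doubling period, the exaflop estimate lands around 2019, matching IBM's target. The zettaflop estimate lands around 2029, so the "early 2030s" projection corresponds to a slightly slower doubling period of roughly 1.1 to 1.2 years.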

References

1. Human brain, sizes.com
2. WD intros 4TB My Book Studio Edition II external hard drive, engadget.com
3. SanDisk Developing 128 GB Micro-SD Card For 2011 Release, Physorg
4. Latest SD card format to reach 2TB of storage, ZDNet
5. Internet data heads for 500bn gigabytes, The Guardian
6. 10 fool-proof predictions for the Internet in 2020, Network World
7. Intel Reinvents Transistors Using New 3-D Structure, Intel
8. Complex Integrated Circuits Made of Carbon Nanotubes, Technology Review
9. Exponential Growth of Computing, Singularity.com
10. Top 500 List
11. 20 Petaflop Sequoia Supercomputer, IBM
12. IBM breaks petaflop barrier, InfoWorld
13. The Singularity is Near, by Ray Kurzweil
14. Simulated reality, Wikipedia
