27th January 2017

AI matches humans on standard visual intelligence test

Researchers at Northwestern University have developed an AI system that performs at human levels on a standard visual intelligence test.

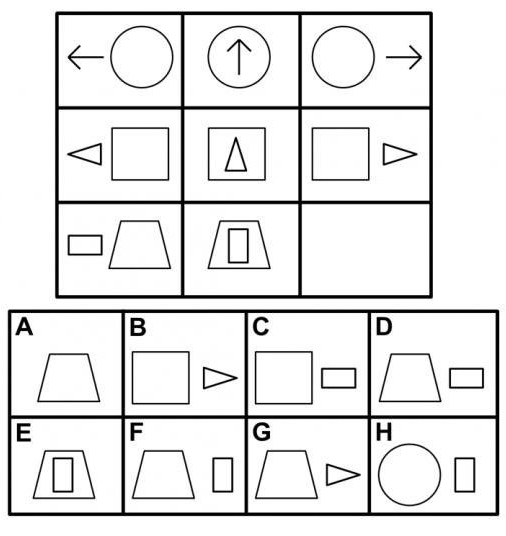

A team at Northwestern University has developed a computational model that performs at human levels on a standard visual intelligence test. This work is an important step toward making artificial intelligence systems that see and understand the world as humans do.

"The model performs in the 75th percentile for American adults, making it better than average," said Professor Ken Forbus, who holds a PhD in Artificial Intelligence from MIT. "The problems that are hard for people are also hard for the model, providing additional evidence that its operation is capturing some important properties of human cognition."

The ability to solve complex visual problems is one of the hallmarks of human intelligence. Developing artificial intelligence systems that have this ability not only provides new evidence for the importance of symbolic representations and analogy in visual reasoning, but could also shrink the gap between computer and human cognition.

The new computational model is built on CogSketch, an AI platform developed in Forbus's laboratory that can solve visual problems and understand sketches by using a process of analogy. Forbus developed the system with Andrew Lovett, a former Northwestern postdoctoral researcher in psychology. Their research is published this month in the journal Psychological Review.

While Forbus and Lovett's system can be used to model general visual problem-solving phenomena, they specifically tested it on Raven's Progressive Matrices, a nonverbal standardised test that measures abstract reasoning. Each of the test's problems consists of a matrix with one image missing. The test taker is given six to eight choices from which to pick the image that best completes the matrix.

"The Raven's test is the best existing predictor of what psychologists call 'fluid intelligence,' or the general ability to think abstractly, reason, identify patterns, solve problems, and discern relationships," said Lovett, now a researcher at the US Naval Research Laboratory.
"Our results suggest that the ability to flexibly use relational representations, comparing and reinterpreting them, is important for fluid intelligence."

The ability to use and understand sophisticated relational representations is a key to higher-order cognition. Relational representations connect entities and ideas such as "the clock is above the door" or "pressure differences cause water to flow." These types of comparisons are crucial for making and understanding analogies, which humans use to solve problems, weigh moral dilemmas, and describe the world around them.

"Most artificial intelligence research today concerning vision focuses on recognition, or labelling what is in a scene rather than reasoning about it," Forbus said. "But recognition is only useful if it supports subsequent reasoning. Our research provides an important step toward understanding visual reasoning more broadly."
